The Equivalence of Weighted Kappa and the Intraclass Correlation Coefficient as Measures of Reliability - Joseph L. Fleiss, Jacob Cohen, 1973

Kappa values for interobserver agreement for the visual grade analysis... | Download Scientific Diagram

Intra and Interobserver Reliability and Agreement of Semiquantitative Vertebral Fracture Assessment on Chest Computed Tomography | PLOS ONE

Inter-observer agreement and reliability assessment for observational studies of clinical work - ScienceDirect

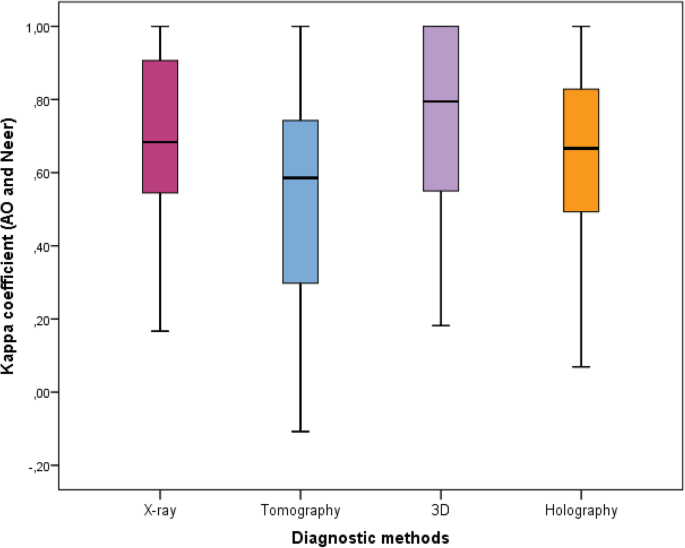

Inter-observer reliability of alternative diagnostic methods for proximal humerus fractures: a comparison between attending surgeons and orthopedic residents in training | Patient Safety in Surgery | Full Text

Average Inter-and Inter-Observer with Kappa and Percentage of Agreement... | Download Scientific Diagram

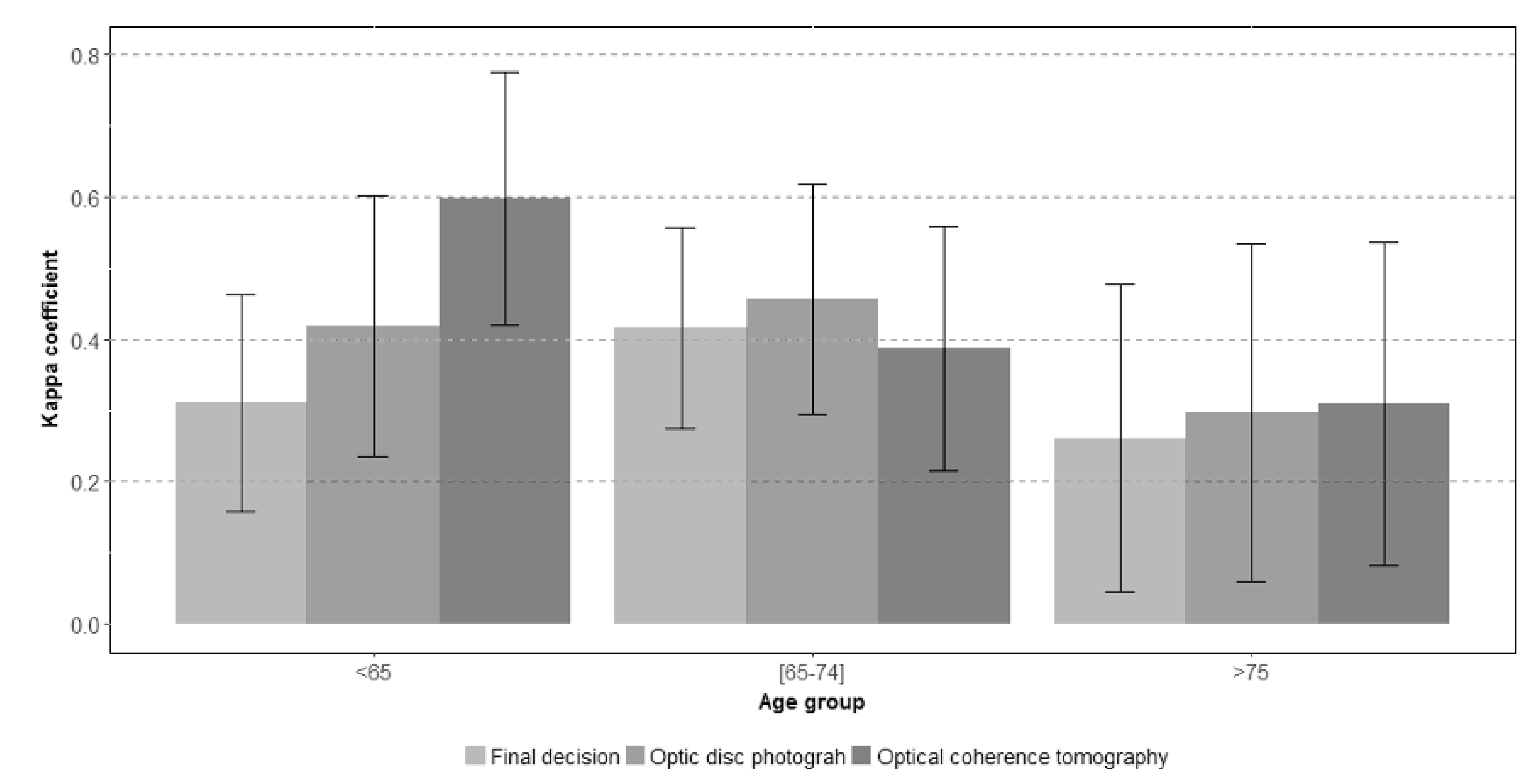

JCM | Free Full-Text | Interobserver and Intertest Agreement in Telemedicine Glaucoma Screening with Optic Disk Photos and Optical Coherence Tomography

Inter-Rater Reliability: Kappa and Intraclass Correlation Coefficient - Accredited Professional Statistician For Hire

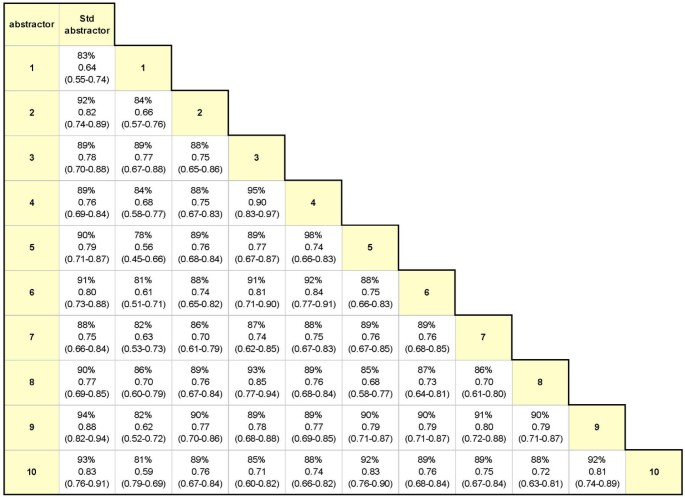

Examining intra-rater and inter-rater response agreement: A medical chart abstraction study of a community-based asthma care program | BMC Medical Research Methodology | Full Text

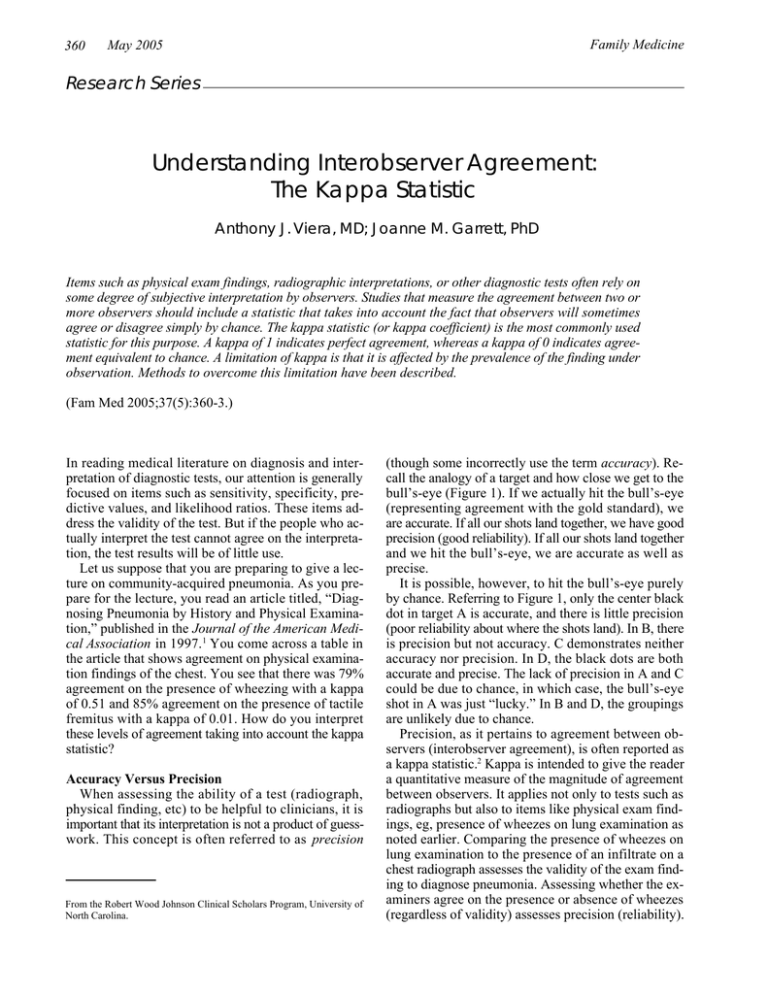

![[PDF] Understanding interobserver agreement: the kappa statistic. | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/e45dcfc0a65096bdc5b19d00e4243df089b19579/3-Table2-1.png)

![[PDF] Interrater reliability: the kappa statistic | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/bf3a7271860b1667e3ceb84e5bc400d2635ff8b7/3-Table2-1.png)